Most discussions about AI visuals still focus on generation. People talk about prompts, model quality, and how quickly a tool can turn a sentence into an image. But for many real users, that is not the most important part. The more valuable question is often much simpler: how do you improve an image you already have?

That is where image-to-image starts to feel more useful than flashy. It shifts the role of AI from “invent something for me” to “help me transform what I already made, captured, or planned.” That may sound like a small difference, but in practice it changes the entire experience. It makes the workflow feel less like gambling on prompts and more like making decisions inside a creative process.

- The Old Problem With Starting From Nothing

- What Makes This Workflow Feel More Practical

- Why It Feels More Like Direction and Less Like Luck

- A Better Match for How Teams Actually Work

- Why Model Choice Matters More Here Than People Think

- The Hidden Value of Having a Reference

- Where This Workflow Has the Most Real-World Value

- What Users Still Need to Do Well

- Why This Approach Feels More Durable Than Hype-Driven AI Features

The Old Problem With Starting From Nothing

Text-to-image is powerful, but it often creates a strange kind of distance between intention and result.

You may know exactly what you want in your head, but the model still has to guess. It guesses the mood, the framing, the facial structure, the styling, the product shape, the background logic, and the visual tone. Sometimes it guesses well. Sometimes it misses in a way that looks impressive on the surface but wrong underneath.

That is why many users end up rewriting prompts again and again, trying to recover a vision that was already clear to them from the beginning.

Image-to-image solves that problem in a more grounded way. Instead of asking AI to imagine the starting point, you provide the starting point yourself.

What Makes This Workflow Feel More Practical

The biggest strength of image-to-image is not novelty. It is efficiency.

When you already have a usable source image, the creative task becomes easier to define. You are no longer trying to create everything at once. You are choosing what to preserve and what to change.

That could mean keeping a face but changing the outfit.

It could mean keeping a product but changing the scene.

It could mean keeping the composition but changing the style.

It could mean keeping the overall idea but improving the finish.

This is a much more realistic way to work. Most creative tasks in the real world do not begin with emptiness. They begin with a draft, a reference, a rough asset, or a visual that is close but not quite there yet.

Why It Feels More Like Direction and Less Like Luck

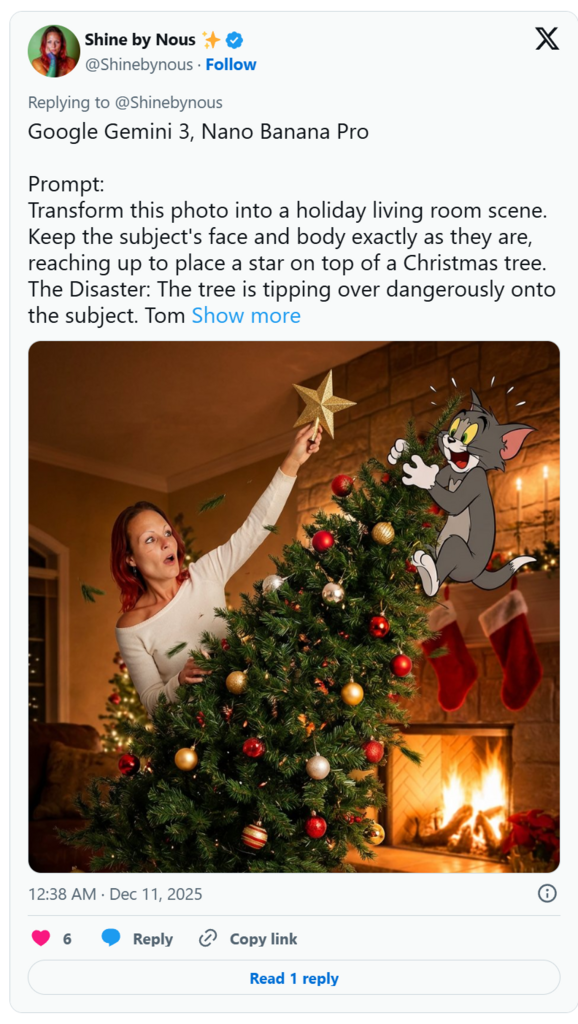

A useful way to understand image-to-image is to think of it as AI-assisted visual direction.

You are not just asking for output. You are guiding transformation.

That makes the process feel more intentional because the source image already carries information the model can use. It contains proportion, layout, subject identity, object shape, lighting cues, and other visual signals that text alone would struggle to describe precisely.

This is why image-to-image often feels calmer than pure prompt generation. You are not fighting for control in every single attempt. You are beginning with structure.

For creators, that can reduce wasted time.

For marketers, it can reduce inconsistency.

For designers, it can reduce the need to rebuild similar ideas from scratch.

A Better Match for How Teams Actually Work

One reason this workflow stands out is that it fits the way modern content production actually happens.

A brand team may already have existing campaign photos but need new variations.

A founder may have rough product visuals but want something more polished.

A creator may have one strong portrait and want multiple aesthetic directions for different platforms.

An artist may want to push a sketch into a more finished visual language without abandoning the original composition.

In each of these cases, image-to-image is not just convenient. It is structurally better suited to the task.

That is what makes it more than a technical feature. It becomes part of a repeatable workflow.

Why Model Choice Matters More Here Than People Think

A platform that offers image creation is one thing. A platform that gives users different model options for different visual tasks is much more interesting.

That distinction matters because image-to-image is not a single behavior.

Sometimes you want a result that stays very close to the original.

Sometimes you want a dramatic restyle.

Sometimes you want stronger realism.

Sometimes you want quick drafts.

Sometimes you want finer control over small edits rather than broad reinterpretation.

A multi-model setup makes those choices more meaningful. It suggests that the workflow is not built around one rigid output style, but around matching the tool behavior to the kind of transformation you need.

That is especially important for users who care less about AI hype and more about whether the result is actually usable.

The Hidden Value of Having a Reference

People often underestimate how much easier creative decision-making becomes once a reference image is involved.

A reference does more than guide the model. It also clarifies your own judgment.

When you compare outputs against a base image, you can see more clearly what improved, what drifted, and what should be rejected. The process becomes easier to evaluate because you are not judging an isolated image in a vacuum. You are judging a change.

That is a major advantage. It turns AI from a purely generative system into something closer to a visual revision partner.

And in many professional or semi-professional settings, revision is far more valuable than invention.

Where This Workflow Has the Most Real-World Value

The practical value of Toimage AI becomes especially obvious in four use cases.

Content expansion

One base image can lead to multiple styles, formats, or moods without forcing you to rebuild the entire concept from zero.

Product presentation

A simple product shot can be transformed into a more polished, themed, or campaign-ready visual.

Visual consistency

When a person, object, or design language needs to stay recognizable across outputs, starting from a source image gives you a much stronger foundation.

Concept refinement

If an image is already close to what you want, image-to-image is often the fastest path to making it better.

What Users Still Need to Do Well

Of course, this workflow does not eliminate the need for judgment.

A strong result still depends on three things.

First, the source image has to give the model something clear to work with: a recognizable subject and enough visual detail worth preserving.

Second, the instruction has to be clear enough to separate what should stay from what should change.

Third, the user still needs taste. AI can produce many options quickly, but it cannot decide which version best serves a brand, a story, a campaign, or a creative goal.

That is why image-to-image works best when treated as a refinement tool rather than a magic button.

Why This Approach Feels More Durable Than Hype-Driven AI Features

A lot of AI features look exciting for a moment and then lose their value once the novelty wears off. Image-to-image feels different because it is tied to an ongoing need: people constantly have to revise visuals, not just generate new ones.

That is why this workflow has staying power.

It aligns with how creative work already functions. People rarely move in a straight line from idea to final asset. They adjust. They refine. They compare. They keep part of the original and improve the rest.

Seen that way, image-to-image is not just another generation mode. It is a bridge between human intent and AI execution. And that is exactly why it feels less like a trick and more like a tool people can keep using long after the first wow moment is gone.