The industry-wide obsession with “instant” content has created a fundamental misunderstanding of how generative tools actually function in a professional pipeline. We often hear that AI collapses the production schedule from weeks to seconds. While technically true for a single 5-second clip, the reality for a creative director or a marketing lead is more complex. The introduction of an AI Video Generator doesn’t necessarily shorten the workday; it shifts the cognitive and temporal load from manual execution to iterative curation.

In the traditional model, the bottleneck was labor—the hours spent keyframing, masking, or setting up lighting rigs. In the generative model, the bottleneck is the review cycle. When you can produce ten variations of a scene in the time it used to take to render one frame, the challenge moves from “how do we make this?” to “which of these actually aligns with the brand’s visual grammar?” This transition requires a complete rethink of production velocity.

- The Deconstruction of Pre-Production

- The New Review Cycle: From "Rough Cut" to "Prompt Refinement"

- Managing the Consistency Gap

- Operationalizing Throughput vs. Latency

- The Delivery Bottleneck: Resolution and Upscaling

- Integration into the Existing Pipeline

- The Skill Shift: From Craftsman to Curator

- Conclusion: The Reality of the "Fast" Future

The Deconstruction of Pre-Production

Historically, pre-production was a linear path: script, storyboard, moodboard, and perhaps a low-fidelity animatic. This phase was designed to minimize risk because the cost of “getting it wrong” during the shoot or the high-res render phase was astronomical. You couldn’t just “try a different lens” once the set was struck.

With the advent of high-fidelity AI Video Generator tools, the moodboard and the animatic are merging into a single, fluid process. Producers are now using image generators to establish the aesthetic and then immediately porting those assets into video engines to test motion. This isn’t just about speed; it’s about the democratization of high-fidelity prototyping.

However, there is a distinct limitation here that many operators overlook: the lack of precise spatial control. While you can describe a camera movement, the current generation of tools often hallucinates physics when pushed into complex geometric maneuvers. You might get a stunning cinematic sweep, but the objects within the frame may morph or drift in ways that defy basic physics. In a professional setting, this means the “velocity” gained in generation is often lost in the repeated attempts to get a single clean take that doesn’t break immersion.
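To make the cost of those repeated attempts concrete, here is a rough back-of-the-envelope sketch. It assumes each generation is an independent try with the same chance of producing a clean take, which is a simplification, since refining the prompt between attempts changes the odds. The 10% success rate and the 90-second generation time are illustrative assumptions, not measurements from any tool.

```python
import math


def expected_attempts(p_clean: float) -> float:
    """Average number of generations until the first clean take.

    Models each attempt as an independent trial (geometric distribution).
    """
    if not 0 < p_clean <= 1:
        raise ValueError("p_clean must be in (0, 1]")
    return 1 / p_clean


def attempts_for_confidence(p_clean: float, confidence: float = 0.95) -> int:
    """Smallest number of attempts that yields at least one clean take
    with the requested probability."""
    if not 0 < p_clean <= 1:
        raise ValueError("p_clean must be in (0, 1]")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be in (0, 1)")
    if p_clean == 1:
        return 1
    return math.ceil(math.log(1 - confidence) / math.log(1 - p_clean))


# Illustrative assumptions only.
P_CLEAN = 0.10               # assumed chance that one generation is usable
SECONDS_PER_GENERATION = 90  # assumed time for one generation

average_tries = expected_attempts(P_CLEAN)
safe_budget = attempts_for_confidence(P_CLEAN, confidence=0.95)

print(f"Average tries per clean take: {average_tries:.0f}")
print(f"Tries to budget for 95% confidence: {safe_budget}")
print(f"Generation time for that budget: {safe_budget * SECONDS_PER_GENERATION / 60:.1f} min")
```

Under these assumptions the average looks manageable, but the number of tries you need to budget for a reliable result is roughly three times higher, and that is before anyone has reviewed a single take.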

The New Review Cycle: From “Rough Cut” to “Prompt Refinement”

The traditional review cycle is a series of gates. You have the assembly, the rough cut, the fine cut, and finally, the picture lock. Each gate is an opportunity for stakeholders to provide feedback. In a workflow centered around an AI Video Generator, these gates become much more porous.

Because the cost of “shooting” a new scene is effectively the cost of a few compute credits, stakeholders are often tempted to ask for infinite variations. This “infinite optionality” can actually paralyze a production. If a director can see twenty different versions of a character walking through a neon-lit rainstorm, the decision-making process slows down.

Professional teams are combatting this by treating the AI as a high-speed engine for “disposable iterations.” You use the AI Video Generator to fail fast. Instead of spending three days on a physics simulation that might not work, you spend thirty minutes generating ten variants to see if the concept has legs. The “velocity” here is found in the ability to discard bad ideas before they consume significant resources.

Managing the Consistency Gap

One of the most significant hurdles in maintaining production velocity is the “Character Consistency Problem.” In a traditional shoot, the actor looks the same in every shot because they are the same person. In a generative workflow, maintaining a consistent face, outfit, and lighting across twenty different prompts is a technical challenge that requires more than just a good prompt.

Creative operators are currently bridging this gap through a hybrid approach. They might use an AI Video Generator for the background and atmospheric elements, then overlay traditionally filmed or 3D-rendered characters. Or they fine-tune the model on a specific person or product using LoRA (Low-Rank Adaptation), a technique that trains a small adapter on top of the base model rather than retraining the whole thing.

Even with these workarounds, it is important to reset expectations regarding “one-click” production. Caution is still warranted: while a tool can generate a beautiful 1080p clip, stitching those clips into a cohesive 60-second narrative requires a level of manual oversight that AI hasn’t automated yet. The “glue” between shots (matching the color temperature, the grain, and the motion blur) is still the domain of the human editor.

Operationalizing Throughput vs. Latency

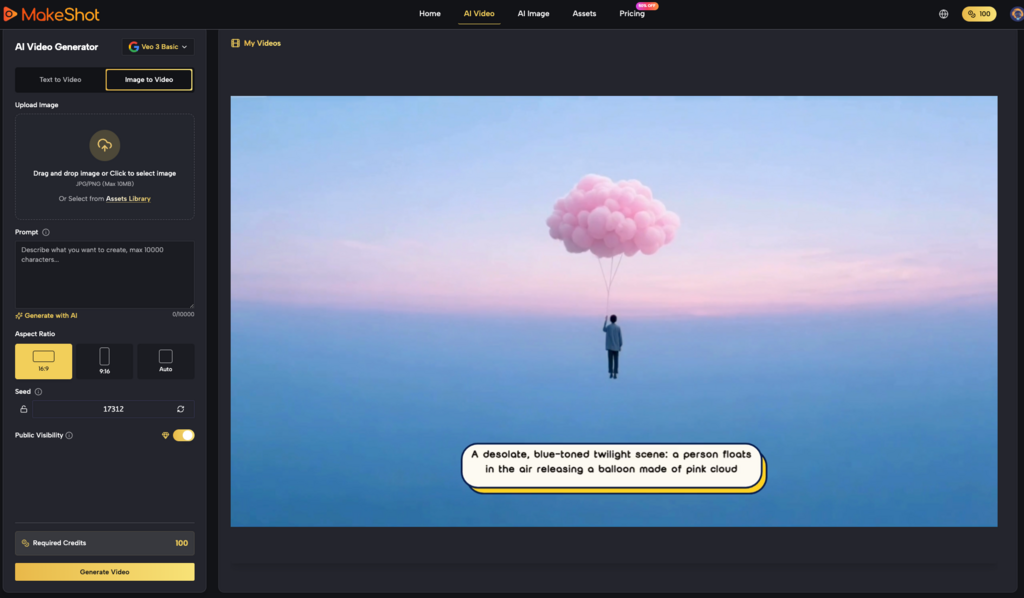

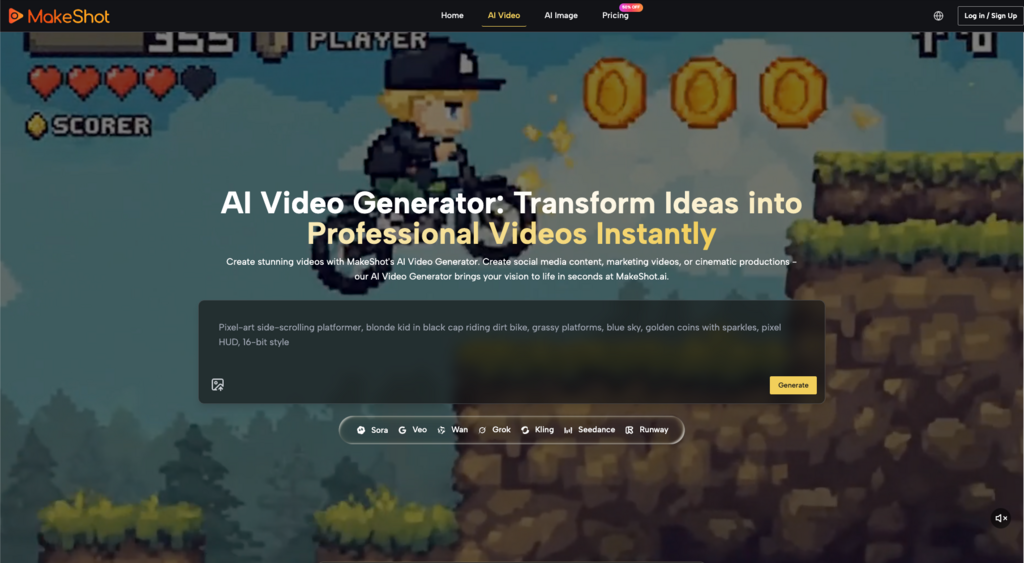

When evaluating tools like those found on MakeShot, it is helpful to distinguish between throughput (how much you can make) and latency (how fast a single item is made). An AI Video Generator excels at throughput. You can feed it a CSV of 100 prompts and get 100 videos back by lunch. This is a massive win for performance marketers who need to A/B test 50 different ad creatives.

For cinematic production, however, latency and precision matter more. The time it takes to refine a single prompt to get the exact “hand gesture” or “eye contact” required for a dramatic beat can be significant. In some cases, it might actually be faster to film a practical plate than to fight with a seed number for four hours. Understanding when not to use AI is just as important for production velocity as understanding when to use it.
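The difference between the two modes is easy to see with some simple arithmetic. The sketch below is illustrative only: the 120-second generation time, the ten parallel jobs, the four-minute review per attempt, and the forty refinement attempts are all assumed numbers, and the function names are hypothetical rather than part of any tool's API.

```python
import math


def batch_wall_clock_s(num_clips: int, seconds_per_clip: float, parallel_jobs: int) -> float:
    """Wall-clock time for a batch where clips render in parallel 'waves'."""
    waves = math.ceil(num_clips / parallel_jobs)
    return waves * seconds_per_clip


def throughput_per_hour(num_clips: int, wall_clock_s: float) -> float:
    """Clips delivered per hour of wall-clock time."""
    return num_clips * 3600 / wall_clock_s


def refinement_wall_clock_s(attempts: int, seconds_per_clip: float, review_seconds: float) -> float:
    """Time to refine ONE shot: every attempt must be generated and reviewed
    before the next prompt tweak, so the steps cannot run in parallel."""
    return attempts * (seconds_per_clip + review_seconds)


# Performance-marketing batch: 100 independent prompts (assumed numbers).
batch_s = batch_wall_clock_s(num_clips=100, seconds_per_clip=120, parallel_jobs=10)
print(f"Batch of 100: {batch_s / 60:.0f} min, {throughput_per_hour(100, batch_s):.0f} clips/hour")

# Cinematic shot: one dramatic beat refined sequentially (assumed numbers).
shot_s = refinement_wall_clock_s(attempts=40, seconds_per_clip=120, review_seconds=240)
print(f"One refined shot: {shot_s / 3600:.1f} hours")
```

With these assumed figures, the batch of 100 ad variants finishes in about twenty minutes, while the single refined shot takes four hours. The per-clip latency is identical in both cases; what differs is whether the work can be parallelized or has to wait on a human judgment after every attempt.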

The Delivery Bottleneck: Resolution and Upscaling

Most generative models currently output at resolutions that are insufficient for 4K broadcast or large-scale digital out-of-home (DOOH) advertising. This creates a secondary production step: upscaling and enhancement.

A typical workflow might look like this:

- Generate low-res base footage using an AI Video Generator.

- Pass the footage through a temporal stabilizer to fix flickering.

- Use a dedicated AI upscaler to bring the resolution to 4K.

- Color grade in a tool like DaVinci Resolve to ensure brand alignment.

This “post-generative” phase is where the professional-grade results are actually made. If you ignore this phase, the output often has that tell-tale “AI sheen”—a lack of micro-contrast and a slightly plastic texture. True production velocity includes the time it takes to move an asset through this entire chain, not just the time the “Generate” button is spinning.
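One way to keep the whole chain in view is to estimate time per stage rather than time per generation. The following is a minimal sketch of that accounting. The stage names mirror the workflow above, but the per-clip minutes are hypothetical placeholders that a team would replace with its own measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    minutes_per_clip: float  # hypothetical estimate, not a benchmark


# Stages follow the workflow described above; durations are assumptions.
PIPELINE = [
    Stage("generate", 2),
    Stage("stabilize", 5),
    Stage("upscale_4k", 15),
    Stage("color_grade", 20),
]


def end_to_end_minutes(stages: list[Stage]) -> float:
    """Total time for one clip to pass through every stage."""
    return sum(stage.minutes_per_clip for stage in stages)


def stage_share(stages: list[Stage], name: str) -> float:
    """Fraction of the end-to-end time spent in the named stage."""
    total = end_to_end_minutes(stages)
    spent = sum(s.minutes_per_clip for s in stages if s.name == name)
    return spent / total


total = end_to_end_minutes(PIPELINE)
print(f"End-to-end per clip: {total:.0f} min")
print(f"Share spent generating: {stage_share(PIPELINE, 'generate'):.0%}")
```

With these placeholder numbers, generation accounts for only a small fraction of the time each clip spends in the pipeline, which is why optimizing the “Generate” step alone rarely changes the delivery date.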

Integration into the Existing Pipeline

The most successful creative teams aren’t replacing their entire stack with an AI Video Generator; they are treating it as a new plugin within their existing ecosystem.

For instance, a motion graphics designer might use AI to generate “texture plates” or “environmental backgrounds” that would have taken hours to paint in Photoshop or render in Cinema 4D. They then bring these AI-generated assets into After Effects to serve as the foundation for their typography and UI overlays. This hybridity preserves the precision of traditional tools while leveraging the speed of generative ones.

The uncertainty here lies in the legal and ethical landscape. Production velocity can be brought to a grinding halt by a legal department’s concern over training data or copyrightability. This is why many studios are leaning toward platforms that offer transparency regarding their models or those that allow for the use of proprietary, “clean” datasets.

The Skill Shift: From Craftsman to Curator

As the “how” of making video becomes more commoditized, the “what” and the “why” become the primary value drivers. The role of the video editor is evolving into something closer to a “creative technologist.” You need to know which AI Video Generator handles human anatomy best, which one excels at fluid dynamics, and which one has the most stable temporal consistency.

This shift requires a new type of literacy. It’s no longer enough to know the shortcut keys for a trim tool; you need to understand the latent space of these models. You need to know how to “nudge” a model through negative prompting or how to use a ControlNet (a conditioning network that constrains generation with inputs such as edge maps, depth maps, or poses) to guide the structure of a scene.

Conclusion: The Reality of the “Fast” Future

We are moving toward a world where the friction between an idea and a moving image is negligible. But “no friction” does not mean “no work.” The velocity gained by using an AI Video Generator is being reinvested into higher volume and higher complexity.

Instead of delivering one “perfect” hero film for a campaign, teams are now expected to deliver a personalized video for every audience segment. The work hasn’t disappeared; it has simply changed shape. We have moved from an era of scarcity (where we didn’t have enough footage) to an era of abundance (where we have too much footage to sort through). Navigating this abundance with practical judgment and a grounded understanding of the tools is the only way to turn the “hype” of generative video into a sustainable, professional reality.

The goal isn’t just to make video faster. The goal is to use that saved time to make the stories we tell more resonant, more targeted, and more visually ambitious than what was possible when we were limited by the manual speed of the keyframe. Using an AI Video Generator is the first step, but the final mile still belongs to the editor with the eye for detail.