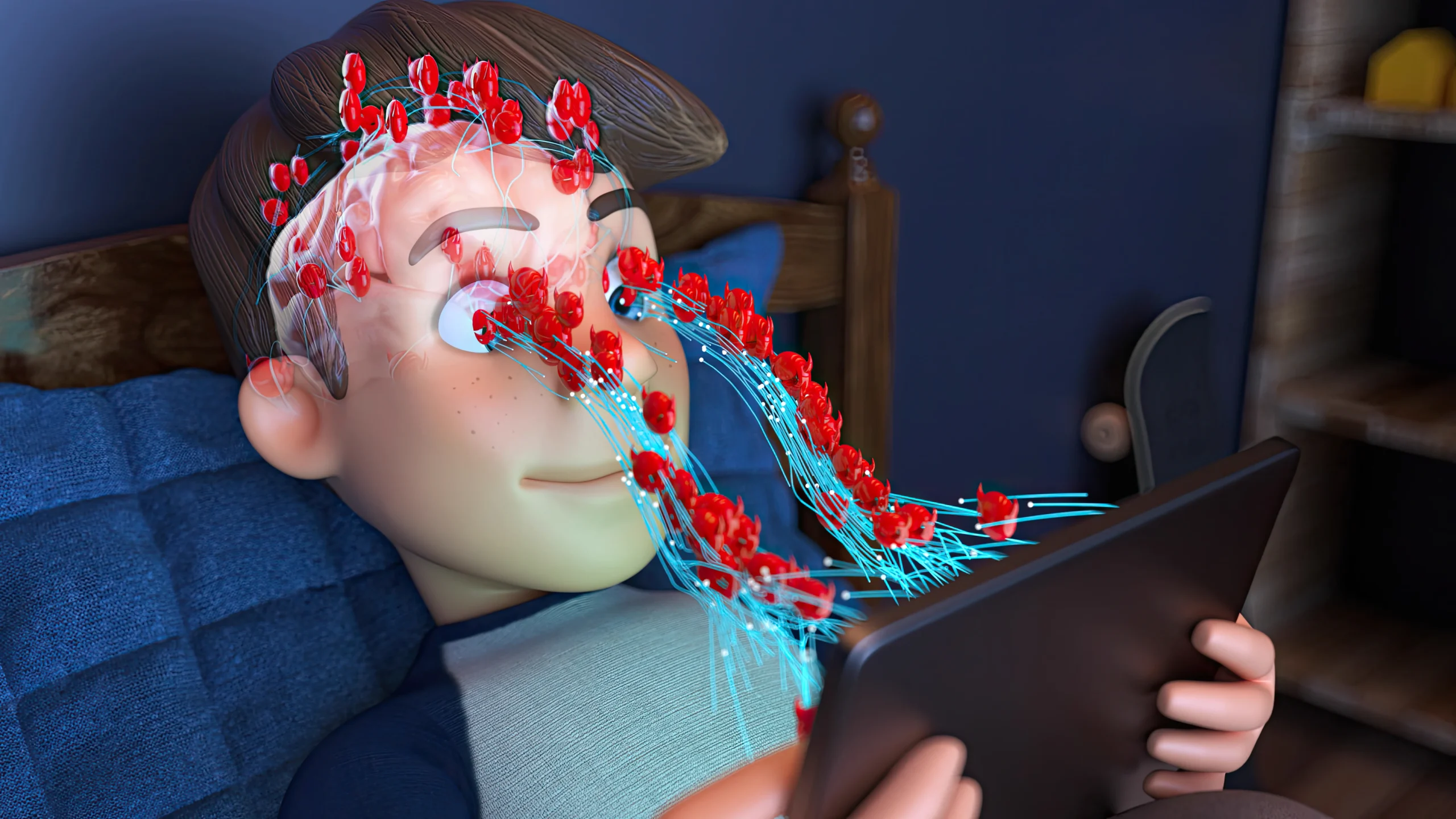

The modern digital landscape is defined by an overwhelming surplus of information, and viewers have learned to filter out static stimuli almost instantly. In this environment, Image to Video AI serves as a bridge between traditional photography and the high-engagement world of cinematic motion. For many digital marketers and independent creators, the problem lies in the “scroll-stop” effect, or rather the lack of it. When a user encounters a still image in a feed filled with movement, the brain often categorizes it as background noise. This loss of attention leads to lower click-through rates and a general decline in brand resonance. By converting still assets into dynamic narratives, creators can reclaim the viewer’s focus and ensure their message is not just seen but felt.

In my tests, the effectiveness of motion is not just about the movement itself, but about the quality and realism of that movement. When an image is transformed using high-end models such as Veo 3.1 or Seedance 2.0, the resulting five-second sequence mimics the physical laws of the real world. This psychological grounding is crucial; if the movement looks “uncanny” or jarring, it can actually push users away. However, when the AI correctly interprets depth and lighting, as I have observed in several landscape-to-video conversions, the result is a seamless extension of reality. This technology represents a shift from passive consumption to active engagement, where the viewer is drawn into the frame by the natural flow of the visual narrative.

- The Science Of Visual Persistence And Human Motion Detection

- Strategic Implementation Of Natural Motion In Brand Messaging

- Standard Operational Protocol For Creating Professional Motion Assets

- Analyzing Engagement Metrics Between Static And AI Enhanced Media

- Future Perspectives On Automated Visual Content Creation

The Science Of Visual Persistence And Human Motion Detection

Human evolution has hard-wired our brains to prioritize moving objects over stationary ones, a survival mechanism that now shapes our behavior on social media. When we use advanced synthesis tools, we are essentially tapping into this primitive biological response. The models available today do not merely distort the pixels of an image; they reconstruct the scene in a three-dimensional latent space. This allows for complex actions, such as the AI Hug or AI Kiss features, which require the system to understand the spatial relationship between two subjects. In my observation, the AI’s ability to maintain the structural integrity of a face while it moves is a significant technical milestone, one that brings synthetic media closer to being indistinguishable from filmed footage.

Despite these advancements, it is important to acknowledge that generative video is still an evolving field. The success of a generation is often highly dependent on the quality of the input prompt. If a prompt is too vague, the AI may struggle to determine the correct trajectory of motion, leading to “hallucinations” where parts of the image morph in unnatural ways. I have found that being specific about the “camera lens” or the “lighting conditions” in the text description helps ground the AI, resulting in a much more stable output. This reliance on prompt engineering is a current limitation that requires users to spend time refining their instructions to achieve professional-grade results.
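To make the point about specificity concrete, here is a minimal sketch of a prompt builder. It assumes nothing about any particular model's prompt syntax: the `MotionPrompt` class, its field names, and the comma-joined output are all hypothetical and chosen only for illustration. It simply shows how adding camera and lighting details turns a vague instruction into a grounded one.

```python
from dataclasses import dataclass


@dataclass
class MotionPrompt:
    """Hypothetical container for the parts of an image-to-video prompt."""
    subject: str
    motion: str
    camera: str = ""    # e.g. "35mm lens, gentle push-in"
    lighting: str = ""  # e.g. "soft golden-hour light"

    def render(self) -> str:
        # Join only the parts that were filled in, in a fixed order.
        parts = [f"{self.subject} {self.motion}".strip(), self.camera, self.lighting]
        return ", ".join(p for p in parts if p)


# A vague prompt leaves the trajectory of motion open to interpretation.
vague = MotionPrompt(subject="a woman", motion="moves")

# A specific prompt names the motion, the lens, and the lighting.
specific = MotionPrompt(
    subject="a woman in a red dress",
    motion="turns slowly toward the camera",
    camera="35mm lens, gentle push-in",
    lighting="soft golden-hour light",
)

print(vague.render())
print(specific.render())
```

Keeping the camera and lighting as separate fields makes it easy to refine one detail at a time between generations, which is how I tend to iterate on a prompt in practice.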

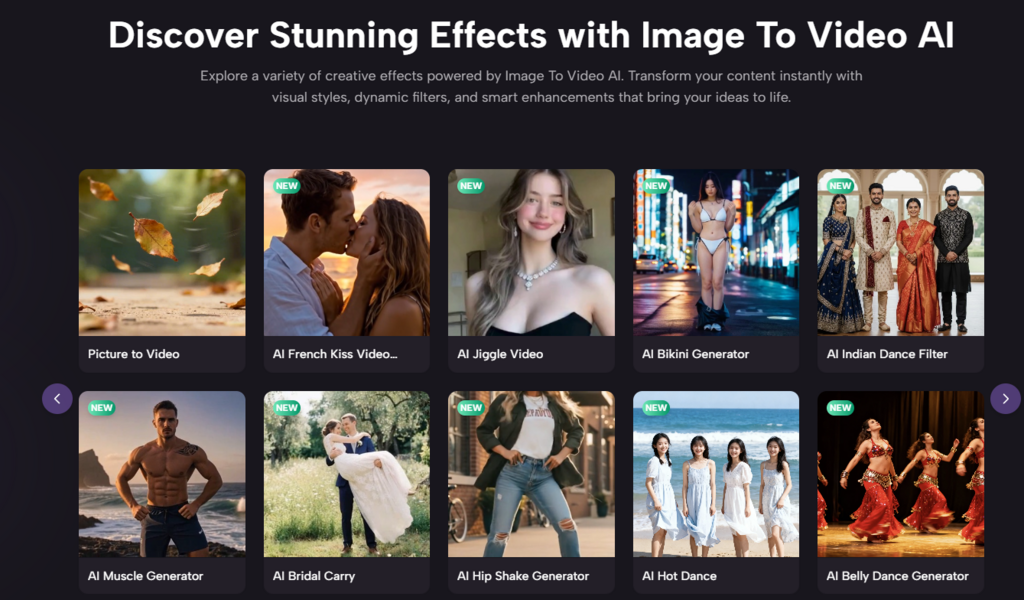

Strategic Implementation Of Natural Motion In Brand Messaging

For a brand, the goal is often to create an emotional connection with the customer in a very short window of time. Static product photos, while necessary, often feel sterile. By introducing subtle motion, such as a dress swaying in a simulated breeze or steam rising from a coffee cup, the brand tells a story of utility and experience. This type of content tends to perform better on platforms like Instagram and TikTok, where the algorithm favors high completion rates. The five-second duration supported by the generation platform suits these “micro-moments,” providing just enough visual information to intrigue the viewer without demanding a long time commitment.

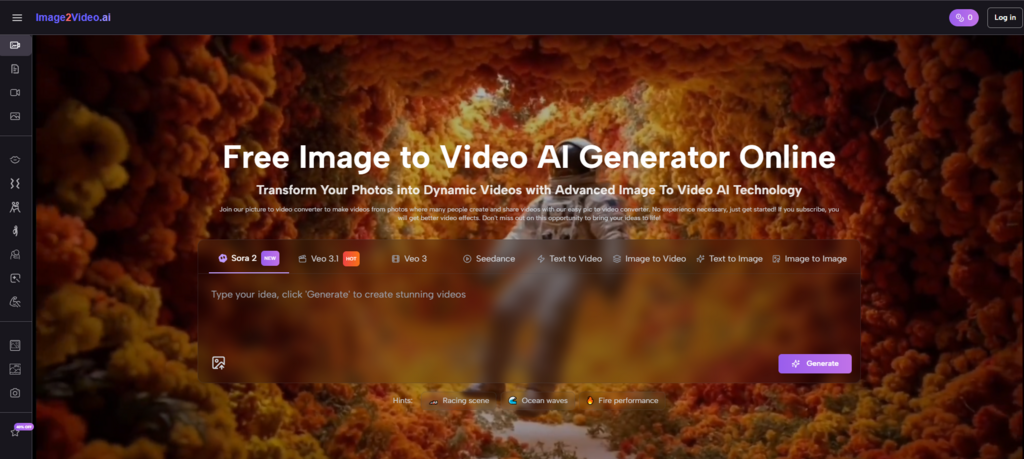

The integration of multiple models like Sora 2 and Veo 3 allows for a diversity of styles that can match various brand voices. Sora 2, for example, is often cited for its cinematic weight and realistic physics, making it ideal for high-end luxury marketing. On the other hand, Seedance 2.0 is highly versatile when it comes to following complex multi-reference instructions. Choosing the right model for the right creative task is a skill in itself. In my testing, I have found that the “Photo to Video” conversion process is most successful when the user matches the complexity of the image to the specific strengths of the selected AI model.

Standard Operational Protocol For Creating Professional Motion Assets

The platform utilizes a structured workflow that ensures a consistent output regardless of the user’s technical background. Following these steps allows for the efficient transformation of visual assets.

- High Resolution Asset Upload

The user begins by uploading a JPEG or PNG file to the system. It is recommended to use images with clear focal points and balanced lighting to give the AI a strong foundation for motion synthesis.

- Semantic Instruction Entry

A natural language prompt is entered to describe the intended animation. This is the stage where the user acts as the director, specifying whether the subject should wave, walk, or if the camera should perform a gentle pan across the scene.

- Neural Processing Duration

The system initiates the generation phase, which typically takes around five minutes. During this window, the AI models calculate the frame-by-frame changes required to bring the still image to life and render the result as an MP4 file.

- Status Completion And Export

Once the status indicates completion, the user can preview the five-second video. If the movement meets the creative requirements, the file can be downloaded and deployed across various digital channels immediately.
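The four steps above can be sketched in code. This is a simulation only, not the platform's actual API: `FakeJob`, `validate_upload`, `run_workflow`, and the status strings are all hypothetical names, and the "generation" is a counter rather than a real model call. The sketch shows the shape of the flow: check the file type, require a prompt, poll until the job completes, then receive an MP4.

```python
from pathlib import Path

ALLOWED_SUFFIXES = {".jpg", ".jpeg", ".png"}  # the article names JPEG and PNG


def validate_upload(filename: str) -> bool:
    """Step 1: accept only JPEG or PNG files (checked by extension only)."""
    return Path(filename).suffix.lower() in ALLOWED_SUFFIXES


class FakeJob:
    """Stand-in for a generation job; a real service would run remotely."""

    def __init__(self, image: str, prompt: str, polls_until_done: int = 3):
        self.image = image
        self.prompt = prompt
        self._remaining = polls_until_done

    def status(self) -> str:
        # Each poll moves the fake job one step closer to completion.
        if self._remaining > 0:
            self._remaining -= 1
            return "processing"
        return "completed"


def run_workflow(image: str, prompt: str) -> str:
    """Run steps 1 to 4 and return the name of the exported MP4."""
    if not validate_upload(image):
        raise ValueError(f"unsupported file type: {image}")
    if not prompt.strip():  # Step 2: a prompt is required
        raise ValueError("prompt must not be empty")
    job = FakeJob(image, prompt)  # Step 3: start generation
    while job.status() != "completed":
        pass  # a real client would sleep between polls
    return Path(image).with_suffix(".mp4").name  # Step 4: export


print(run_workflow("coffee.jpg", "steam rises slowly from the cup"))
```

In a real integration the polling loop would wait between checks, since the article puts generation time at around five minutes.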

Analyzing Engagement Metrics Between Static And AI Enhanced Media

| Engagement Category | Static Photography | AI Motion Enhancement |
| --- | --- | --- |
| Average View Time | Under 1 Second | 4.5 to 5 Seconds |
| Click Through Rate | Baseline Standard | 30% to 50% Higher |
| Production Overhead | Low Cost | Low Cost / Automated |
| Emotional Resonance | Subject Dependent | High Interaction Depth |
| Technical Expertise | Minimal | No Editing Required |
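A "higher" click-through rate in the table above is a relative figure, so it helps to see how that number is derived from two raw rates. The sketch below is a plain arithmetic helper; the function name `relative_uplift` is my own, and the sample rates are illustrative placeholders, not measurements from any campaign.

```python
def relative_uplift(baseline_ctr: float, variant_ctr: float) -> float:
    """Return the relative change of the variant over the baseline, as a fraction."""
    if baseline_ctr <= 0:
        raise ValueError("baseline_ctr must be positive")
    return (variant_ctr - baseline_ctr) / baseline_ctr


# Illustrative numbers only: a 2.0% static CTR versus a 2.8% motion CTR.
static_ctr = 0.020
motion_ctr = 0.028
print(f"Relative uplift: {relative_uplift(static_ctr, motion_ctr):.0%}")
```

Running the same calculation on your own campaign data is the simplest way to check whether motion assets land inside the range the table suggests.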

Future Perspectives On Automated Visual Content Creation

As we look toward the future of Photo to Video technology, the trend is clearly moving toward longer durations and higher degrees of user control. While the current five-second limit is a constraint, it serves as a foundation for a more expansive creative future. We are already seeing the emergence of “consistent character” technology, where an AI can maintain the same face and clothing across multiple generated clips. This will eventually allow for the creation of entire short films derived from a single set of photographs.

The accessibility of these tools also democratizes high-end visual production. Small businesses no longer need a five-figure budget for a commercial shoot; they simply need a good camera and a clear vision. However, with this power comes the responsibility of ethical usage. As synthetic media becomes more realistic, distinguishing between filmed and generated content will require new frameworks for digital transparency. For now, the focus remains on empowering creators to tell better stories, one five-second clip at a time, turning the static remnants of the past into the dynamic experiences of the future.